My Blue Ridge Ruby talk got stealth-posted! I’m very proud of this one; it’s a dense one, but covers a bunch of ground & still manages to find a good (if frenetic) cadence. Plus, it’s got an all-timer bit for me.

Balsamiq Pricing

Balsamiq going per-editor seating feels like a fundamental misunderstanding of what makes it so useful as a tool.

It’s designed for ANYONE to be able to use it. And, especially for small teams, you want folks to have the ability to experiment & grow!

Seat-babysitting is inherently a restrictive behavior, leading to worse outcomes. Especially in the wireframing stage. They’ve essentially created a keycard system, where only the chosen few can make actual product decisions.

Why would you make a low-fi editor that anyone can use if you’re then going to restrict who in an organization can use it?

If I had to guess, they’re trying to offset the cost of their new “AI prototyping” in a way that hides the true costs.

What a farce; and even more damning of the continually manufactured consent around LLMs. Burn it all down

A poem, or a novel, is not a puzzle to solve. It’s an experience to enjoy and think about. And there’s no answer key for that.

Blue Ridge Ruby 2026

Below are some of my rambling notes, I’m still paying off The Conference Tax for being gone for so long. The true heroes of conferences are the folks who hold the fort down at home 🫡🫡🫡.

An A+ Schedule

You should watch all of them, in order (don’t skip the lightning talks either!)

Jeremy, Joe, and Mark created a fantastic schedule. It had a clear, authorial intent with a proper cadence.

That’s why I love single-track conferences so much! You can really see the story the organizers want to tell, and how they want the conversation to be guided. And everyone has common ground for discussion.

My talk

I closed out the conference with my second talk, 5 ways to invest in yourself for the long haul .

- Slide links: https://thomascannon.me/assets/talks/brr-2026/

- Video will be forthcoming, thanks to Confreaks!

Perfectly placed in the schedule

Folks (and myself, honestly) were joking about my bad luck getting the last slot of the conference. But, to be honest, I’m honored. Its placement in the schedule felt right. We’d just spent the last two days around each other, endlessly discussing the state of the industry, lessons learned, and imagined futures. I wanted people to come away from it feeling empowered and eager to carve their own path.

“What if it’s fundamentally busted?”

One worry I had was that the talk wouldn’t click for folks due to its unconventional introduction. Reviewers kept saying they want guidance and clear orchestration on what I’m going to talk about.

There was a single summary slide at the front to outline the terrain, I knew that Jeremy was going to emcee. I wanted the focus of my talk to be on the stuff I’m doing, why I think it’s valuable, and how someone could adapt/adopt it. If I did your regular “here’s what I’m going to talk about in this talk, and how it led to result X/Y/Z,” that’s different and prescriptive IMO.

I am a white, male, DINK, newly-minted CTO at my $dayjob (@dayjob, there you go Jeremy). Going on stage, outlining my career path, and “dropping nuggets of wisdom” would be A Choice™. And I’d just experienced one of those talks a few weeks back, and it felt exactly like I feared: self-congratulating, pointless, meandering.

Also, trying to orchestrate what the talk is about might have caused people to turn their brains off. “Oh, write better commit messages and blog more?” Snooooooze, we all hear that. But walking backwards into that advice? That’s helpful!

Thanks to everyone for “getting it.”

From what I heard after I left the stage, my bet appears to have paid off. Thank you for trusting where I was going. And thank you to everyone who told me they found the talk inspiring, hopeful, useful and that it gave them ideas on how to build their own career. My door’s always open if you want to talk more about it!

Epilogue: The lightning talk stomach drop

Fun fact: Brooke Kuhlmann’s lightning talk made me sweat bullets. I closed out the conference and kept my talk’s details pretty close to the chest, so when Brooke started talking about git-lint and commit message formatting, I literally texted a friend:

ha ha I’m in danger

Thankfully, our talks actually ended up being great complements to each other! And I want to try out git-lint for myself!

The AI Roundtable

Even the most cursory glance at my writing & web presence makes it clear that I have fundamental and profound disagreements with the bubble that is labeled “Artificial Intelligence.”

I think one of the most important parts of the conference for me was the “AI Roundtable,” as hosted by Jeremy. And he deserves specific & significant credit for the way he moderated the discussion. It was focused on qualitative discussions, tightly scoped to how the environment relates to us as Rubyists. The second-order or broader factors were left at the door, not because they’re insignificant (they’re some of the most important discussions we need to be having right now), but because having the conversation from one Rubyist to another, about our language, in the same room, as humans, is a thing that we literally could not have outside a conference context.

I’m not going to go into details because it was a private gathering. But I will say that multiple perspectives were heard. We talked openly, freely, and person-to-person. And Jeremy made sure to keep that human connection in place, and for us to be mindful of the opportunity we had. And that’s very hard, stressful work. So, again, thank you to Jeremy for taking on the challenge.

Nothing was resolved, and it was never going to be in the span of 90 minutes. I’m still a major skeptic, and I am actively cheering for the bubble to burst so that we can create a more sustainable, ethical, democratic future. But I feel less alone. My perspectives have been challenged. I was able to voice some of my most pressing concerns for what our language’s future looks like. And I have even more respect for Rubyists.

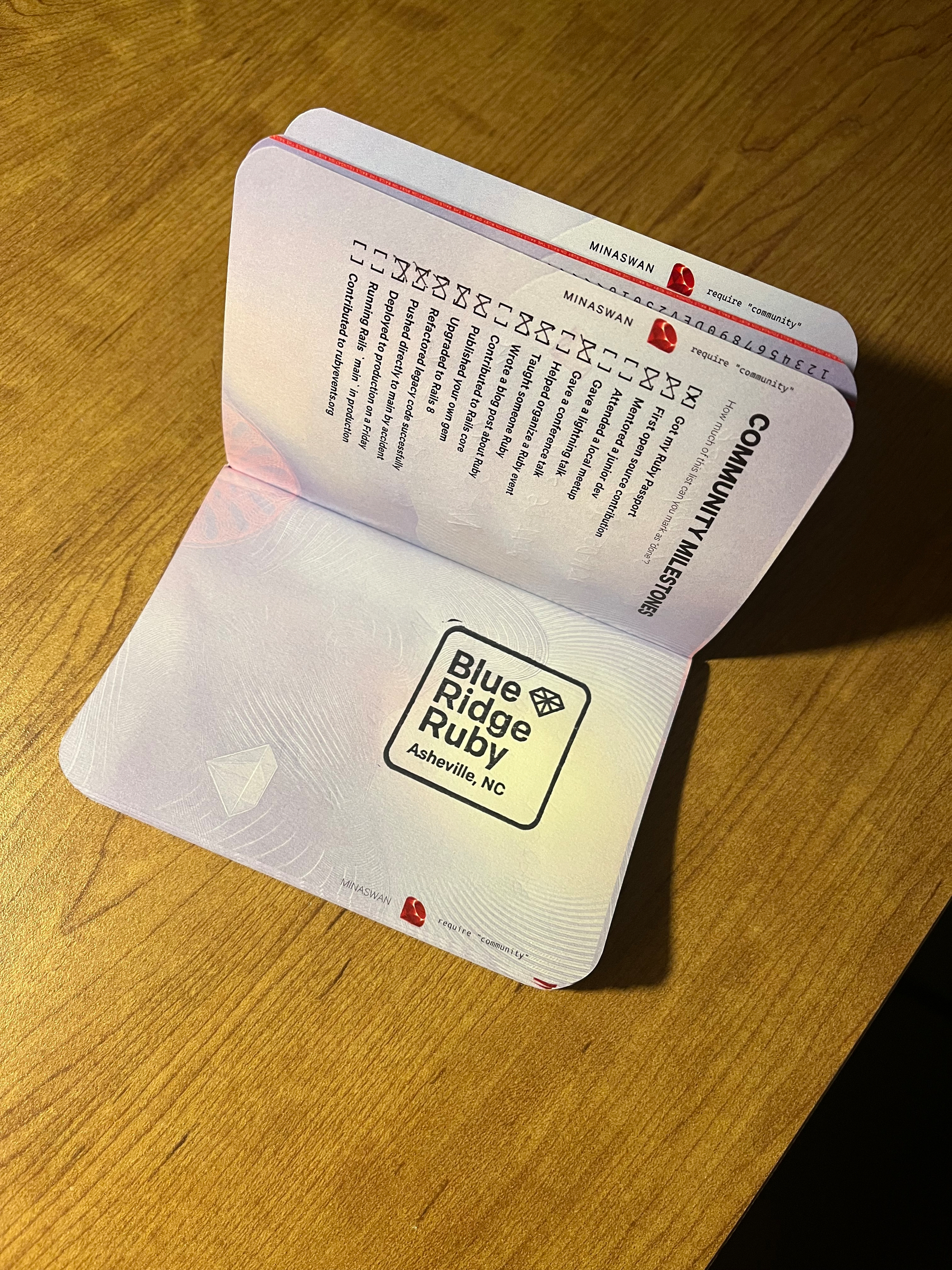

Ruby passports are great!

If you haven’t gotten a Ruby Passport yet, you should go to a conference (preferably a regional conference close to home!) and fill out the form.

Before getting mine, I thought it was a fun, whimsical idea, a bit of neat swag. But, I’m not gonna lie, I did get a genuine rush getting the passport stamped. It was this physical, concrete, real ceremony representing the time and effort I put into going to this event, and what it means for me.

PS: our “annual report” for the Ruby passport for the Blue Ridge Ruby delegation is a lot of fun to scan through!

Misc. notes

- The venue was excellent; I’m delighted it was at the YMI Cultural Center, and I hope future iterations are there as well!

- Regional conferences are excellent! Keep going to them (or go to them, if you haven’t!)

Join the dinner table!

Start your Ruby Events profile! Join, lurk, and post on the Ruby Users Forum! We’re a welcoming bunch, and it’s always more fun together.

I was just trying to look up teardowns of EWIs, and YouTube decided to drag my ass in the harshest way possible.

Today was my 3rd watch of The Wind Rises; which isn’t exactly my top Ghibli movie but has so many tiny, tragic moments that reflect what it’s felt like trying to work amidst all of…this

Another great thing about Oaken: as I rip out old models, I have so much less finagling to do because with Oaken’s seeds, I only have a small dataset that’s logically broken up. So I nuke the old seeds, work on a single test until it passes; then the whole suite.

A truly amazing gift that my dad made; a 3D woodcut of the Practical Computer logo. And the color matching is perfect too!

It deserves to be right under @jennyrjohnsonart.bsky.social’s amazing work.

The Seinfeld Transcoding Problem

Over the past 2 months, I’ve been working on digitizing our physical media library for easier access. Part of this project was digitizing and transcoding the government-issued complete Seinfeld DVD collection. Which is weird in about 4 different ways.

Basically, it acted as a great lesson in:

- The importance of good source data, both in how you structure it and what data you have available to you

- When to pull the rip cord on automation (or, more importantly, recognizing the things that you did already automate)

- Try not to over-automate and recognize when a task is inefficient, but the process is efficient.

This is all over the place!

To start, the data on the disks for the early seasons is all over the place. So, if a disk is episodes 1-4, they aren’t stored sequentially. This goes against the grain of basically every other disk I’ve transcoded in this process.

What is an episode, anyway?

Seinfeld also throws multiple curveballs in its file structure. Notably, The Stranded is listed as Season 3, Episode 10, but was filmed as part of Season 2’s production schedule. So, it’s actually on the Season 1 & 2 box set.

Pop quiz: If an episode is a 2-parter, how should it be broken up as a file?

There are 2 schools of thought:

- It’s a contiguous story, so as a single video

- Broken up into distinct episodes, since that’s how it aired and is syndicated

Seinfeld takes both approaches, based on air date. In the same season, one two-parter is two distinct video files, while another is a single video. This is based on whether it aired on the same day, or was broken up over different days. All metadata sources break them up into distinct entries.

This would have thrown a loop for both bulk-renaming and computer vision identification, and I had to make a judgement call and come up with a modified naming schema for these cases. (eg: S4.E10-11).

Make sure you’ve got good external data

My first pass was to try to find a computer vision tool to solve this, because that’s kind of a perfect use case for computer vision. And I found a python script that extracts frames from a video, searches TMDB for episodes with snapshots that match the best, and renames the files.

A great workflow in theory, but I ran into the problem that plagues computer vision: sourcing. While TMDB does have a feature where it has an API for grabbing screenshots for an episode, their dataset for Seinfeld has maybe 2 screenshots per episode. So it’s not a strong enough dataset for automated identification. When I ran a test of an episode that I had correctly ID’d, it whiffed on it.

I’d also found some potential tools to match by subtitles, but was not able to get them to work (I’m pretty sure they’re one-off vibe coded projects that worked for someone in their specific case). Plus, they were inefficient: they wouldn’t look at the actual subtitles in the file, but would transcribe the audio, which would have slowed down the whole process and been much more resource intensive. I already have the source subtitles! They don’t need to like listen and get an imperfect transcript, I have the transcript! It would be a lot more efficient to compare text against text, so this didn’t inspire confidence in the project.

Even though these approaches failed, they did help in the final workflow

How I processed all these videos in a few hours

The final workflow ended up being:

-

GUI:

- Multiple windows of Windows Explorer:

- One for the tabs of raw videos from the DVDs

- One for the renamed, identified videos that will be transcoded

- A browser with TMDB and seinfind.com (which I’d found while looking for subtitle sources) open

- VLC in a small window in the corner

- Multiple windows of Windows Explorer:

- Workflow:

- Check the physical disk for the corresponding folder (Season 3, Disk 2) to get the episode range

- Bulk-rename the files, based on their “created at” timestamp

- Open each video, scrub for frames, scenarios, or identifying phrases

- Check TMDB/Seinfind with that information, get the match

- Rename the file, onto the next one

The importance of structuring data

This workflow was possible because rather than having 23 episodes in a season, or 180 episodes to work through, the data was broken into 5-6 episodes using a stable identifier: The disk they were from. As I was ripping each disk, I made sure that the files were put into directories and I made sure that the directories matched the the disc names. For example: “Season 4, Disk 3” and then everything inside that DVD would go in there for later processing.

Then, the problem of “oh, which season, which episode am I looking at?” was much more efficient because I’m only looking at those 4-6 episodes at a time. With the batch renamer, I could take a best-guess pass and sort from there. Essentially, I automated that part of the process with:

For this part of the operation, these are episodes 4 through 10.

After that, manual verification kicked in. When the episodes were out of order, I renamed the correct match with a suffix, then jumped to the incorrect episode and identified it.

Which, is just a manually implemented sorting algorithm. Rather than going through all 23 episodes, which would be really intensive for a slow or “differently efficient” CPU (my brain), I am breaking it up into chunks of six because it’s much easier to work in those chunks. And because those chunks will be sequential, I will get the ordered list of episodes.

Task inefficient, Process efficient

This endeavor a good example of when someone is task inefficient, but the overall process is efficient. If you said:

Me, the human, is going to open each video, scrub through it, identify the episode, and sort 180 episodes of television

That feels like a horribly inefficient and daunting process. And at the task level, its inefficient! However, at the process level, it is more efficient because of the work I did to structure the source data, the lessons & tools I found along the way, leveraging existing automations, and the nature of probability.

Having good source data and preparing it to be as workable and easy as possible despite its imperfections is what unlocked the sorting algorithm.

The next part was knowing my tools well enough to automate the things where it makes sense and then using human discernment to finish the rest of the job. Extracting the DVD data, using a batch renamer, and auto-organizing files into disk-based directories are all things I’ve already done to automate the process. TMDB and Seinfind ended up being crucial resources for pattern matching. And because I’m comfortable with my window manager and keyboard shortcuts, I built out a whole bespoke GUI just for this process, with zero code or extra work!

At the process level this approach ended up being much more efficient, because rather than trying to find all the edge cases and particulars of this unstructured data (a series of flat files) and translate into software or prompts, I wrote the program on the fly in my brain. I identified the patterns, used them to build out a structure, and got into a flow state.

Also, I’m only doing this once. I’m not regularly transcoding & organizing nine 23 episode seasons of a beloved broadcast television show that did not logically organize its data on disk. I mean, if I end up doing The West Wing, Deep Space 9, or some other giant set it might make sense to expand these scripts to make the process faster. But, I spent 3 hours trying to get them up-and-running, and the manual work for Seinfeld took 3-4 total.

Computer vision and transcription have very useful applications, but they require strong datasets. It also requires understanding the data, trying to make the best sense of it, and human discernment.

I feel like in the quest to overautomate everything or write custom software for everything, you end up creating more inefficiencies and wasting more time, where a human can get into a flow state and better adapt to the complexities and problems that certain datasets present.

Replaced the battery on my iPod and only busted up the case a little. These buggers are a nightmare.

After finishing The Rose Field, I am even more convinced that lauded writers need editors trained on them like snipers at all times. 🙃